AI Vision Sensor#

Introduction#

The aivision class is used to control and access data from the V5 AI Vision Sensor. The AI Vision Sensor can detect:

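AI Classifications (for example, game objects)

AprilTag IDs

Custom Color Signatures

Custom Color Codes

It provides object data, including position, size, orientation, classification ID, and confidence score.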

The sensor processes visual information using an onboard AI model selected in the AI Vision Utility within VEXcode. The selected model determines which AI Classifications the sensor can detect. When using VS Code, the AI model must first be configured in VEXcode before it can be used in your program. Detected objects are returned through the objects array after takeSnapshot is called.

Class Constructor#

aivision(

int32_t index,

Args&... sigs );

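Parameters#

| Parameter | Type | Description |
|---|---|---|
| index | int32_t | The Smart Port that the AI Vision Sensor is connected to, such as PORT1. |
| sigs | Args&... | One or more detection types to register with the sensor, such as a Color Signature, a Color Code, or aivision::ALL_AIOBJS. |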

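AI Models and Classifications#

The AI Vision Sensor detects different objects through specific AI Classifications. Which objects can be detected depends on the AI Classification model selected when configuring the AI Vision Sensor in the Devices window. The currently available models are: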

Classroom Elements

| ID Number | AI Classification |
|---|---|
| 0 | |
| 1 | |
| 2 | |
| 3 | |
| 4 | |
| 5 | |
| 6 | |
| 7 | |
| 8 | |

V5RC High Stakes

| ID Number | AI Classification |
|---|---|
| 0 | |
| 1 | |
| 2 | |

V5RC Push Back

| ID Number | AI Classification |
|---|---|
| 0 | |
| 1 | |

Example#

// Create Color Signatures

aivision::colordesc AIVision1__greenBox(

1, // index

85, // red

149, // green

46, // blue

23, // hangle

0.23 ); // hdsat

aivision::colordesc AIVision1__blueBox(

2, // index

77, // red

135, // green

125, // blue

27, // hangle

0.29 ); // hdsat

// Create a Color Code from two color signatures

aivision::codedesc AIVision1__greenBlue(

1, // code index

AIVision1__greenBox, // first color signature

AIVision1__blueBox ); // second color signature

// Create the AI Vision Sensor instance

aivision AIVision1(

PORT11, // Smart Port

AIVision1__greenBlue, // color code

aivision::ALL_AIOBJS ); // enable AI Classifications
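The Args&... sigs parameter in the constructor signature indicates a variadic constructor: any number of descriptors can follow the port index. The sketch below illustrates that pattern only. MockSensor, ColorDesc, CodeDesc, and AllAiObjs are hypothetical stand-ins invented for this illustration, not the VEX types, and the mock simply counts what it receives.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical stand-ins for descriptor types; not the VEX definitions.
struct ColorDesc { int index; };
struct CodeDesc  { int index; };
struct AllAiObjs {};

// Hypothetical mock whose constructor mirrors the shape
// aivision(int32_t index, Args&... sigs): a port, then any descriptors.
class MockSensor {
public:
    template <typename... Args>
    explicit MockSensor(int32_t index, Args&... sigs)
        : port_(index), registered_(sizeof...(sigs)) {}

    int32_t port() const { return port_; }
    std::size_t registeredCount() const { return registered_; }

private:
    int32_t port_;
    std::size_t registered_;
};

// Passes a Color Code and an "all AI objects" marker to the mock and
// reports how many descriptors the constructor received.
inline std::size_t demoRegisteredCount() {
    CodeDesc greenBlue{1};
    AllAiObjs allAi;
    MockSensor sensor(11, greenBlue, allAi);
    return sensor.registeredCount();
}
```

Because the parameter pack is declared as Args&..., each descriptor in this sketch must be a named object (an lvalue) rather than a temporary, which matches how the example above declares its descriptors before constructing the sensor.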

Member Functions#

The aivision class includes the following member functions:

- takeSnapshot: Captures data for a specific Color Signature, Color Code, AI Classification group, or AprilTag group.
- installed: Returns whether the AI Vision Sensor is connected to the V5 Brain.

To access detected object data after calling takeSnapshot, use the available Properties.

Before calling any aivision member functions, an aivision instance must be created, as shown below:

/* This constructor is required when using VS Code.

AI Vision Sensor configuration is generated automatically

in VEXcode using the Device Menu. Replace the values

as needed. */

// Create Color Signatures

aivision::colordesc AIVision1__greenBox(

1, // index

85, // red

149, // green

46, // blue

23, // hangle

0.23 ); // hdsat

aivision::colordesc AIVision1__blueBox(

2, // index

77, // red

135, // green

125, // blue

27, // hangle

0.29 ); // hdsat

// Create a Color Code from two color signatures

aivision::codedesc AIVision1__greenBlue(

1, // code index

AIVision1__greenBox, // first color signature

AIVision1__blueBox ); // second color signature

// Create the AI Vision Sensor instance

aivision AIVision1(

PORT1, // Smart Port

AIVision1__greenBlue, // color code

aivision::ALL_AIOBJS ); // enable AI Classifications

takeSnapshot#

Captures an image from the AI Vision Sensor, processes it using the selected AI model or configured color signatures, and updates the objects array.

Each call refreshes the objects array with the most recent detection results. Objects are ordered from largest to smallest (by width), beginning at index 0. If no objects are detected, objectCount will be 0 and objects[i].exists will be false.

1. Takes a snapshot using an object ID and object type.

int32_t takeSnapshot( uint32_t id, objectType type, uint32_t count );

2. Takes a snapshot using a Color Signature.

int32_t takeSnapshot( const colordesc &desc, int32_t count = 8 );

3. Takes a snapshot using a Color Code.

int32_t takeSnapshot( const codedesc &desc, int32_t count = 8 );

4. Takes a snapshot using an AprilTag ID.

int32_t takeSnapshot( const tagdesc &desc, int32_t count = 8 );

5. Takes a snapshot using an AI Classification.

int32_t takeSnapshot( const aiobjdesc &desc, int32_t count = 8 );

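6. Takes a snapshot using an object descriptor.

int32_t takeSnapshot( const objdesc &desc, int32_t count = 8 );

Parameters

| Parameter | Type | Description |
|---|---|---|
| id | uint32_t | The identifier of the object to detect. Note: In VEXcode, AI Classification names (such as blueBall) may be used directly. In VS Code, the numeric ID must be used. |
| type | objectType | Specifies the category of object associated with id. |
| desc | const colordesc &, const codedesc &, const tagdesc &, const aiobjdesc &, or const objdesc & | Descriptor used to detect a specific object, passed directly to takeSnapshot. |
| count | int32_t (uint32_t in overload 1) | The maximum number of objects to store from the snapshot. Defaults to 8. |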

Return Value

Returns an int32_t representing the number of detected objects matching the specified signature or detection type.

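Notes

- The AI Vision Sensor must take a snapshot before object data can be accessed.
- The objects array is refreshed on every call.
- When count is specified, only the largest detected objects (up to the specified amount) are stored.
- AI Classifications depend on the model selected in the AI Vision Utility in VEXcode.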
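To make the ordering and the count limit concrete, here is a minimal sketch of how a list of detections could be sorted by width and truncated. It is a model of the documented behavior, not the sensor's actual implementation; Detection and keepLargest are hypothetical names introduced for this illustration.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for one detected object.
struct Detection {
    int width;
    int height;
};

// Orders detections from widest to narrowest and keeps at most `count`
// of them, mirroring the documented default of 8.
inline std::vector<Detection> keepLargest(std::vector<Detection> found,
                                          std::size_t count = 8) {
    std::stable_sort(found.begin(), found.end(),
                     [](const Detection& a, const Detection& b) {
                         return a.width > b.width;
                     });
    if (found.size() > count) {
        found.resize(count);
    }
    return found;
}
```

With three detections of widths 10, 40, and 25 and a count of 2, this model keeps the 40-wide and 25-wide entries, in that order, so index 0 always holds the widest one.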

Example

// Move forward if an object is detected

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

Drivetrain.driveFor(forward, 50, mm);

}

wait(50, msec);

}

installed#

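Returns whether the AI Vision Sensor is connected to the V5 Brain.

Available Functions

bool installed();

Parameters

This function does not accept any parameters.

Return Value

Returns a Boolean indicating whether the AI Vision Sensor is connected:

- true: The AI Vision Sensor is connected.
- false: The AI Vision Sensor is not connected.

Example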

// Display a message if the AI Vision Sensor is connected

if (AIVision1.installed()){

Brain.Screen.print("Installed!");

}

Properties#

Calling takeSnapshot updates the AI Vision Sensor’s detection results. Each snapshot refreshes the objects array, which contains detected objects for the requested AI Classification, Color Signature, Color Code, or AprilTag ID.

AI Vision data is accessed through properties of objects stored in AIVisionSensor.objects[index], where index begins at 0.

Objects are sorted by area, from largest to smallest.

The AI Vision Sensor's image resolution is 320 × 240 pixels. Object position and size values are expressed in pixels, relative to the sensor's current view.

The following properties are available:

- objectCount: Returns the number of detected objects from the most recent snapshot.
- largestObject: Selects the largest detected object from the most recent snapshot.
- objects: Array containing detected objects, updated by takeSnapshot.
  - .exists: Whether the object entry contains valid data.
  - .width: Width of the detected object in pixels.
  - .height: Height of the detected object in pixels.
  - .centerX: X position of the object's center in pixels.
  - .centerY: Y position of the object's center in pixels.
  - .originX: X position of the object's top-left corner in pixels.
  - .originY: Y position of the object's top-left corner in pixels.
  - .angle: Orientation of a Color Code or AprilTag ID in degrees.
  - .id: Classification ID or AprilTag ID.
  - .score: Confidence score for AI Classifications.

objectCount#

objectCount returns the number of items inside the objects array as an integer.

AIVisionSensor.objectCount

| Component | Description |
|---|---|
| AIVisionSensor | The name of your AI Vision Sensor instance. |

Example

// Display the number of detected objects

while (true) {

Brain.Screen.setCursor(1, 1);

Brain.Screen.clearLine(1);

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

Brain.Screen.print("%d", AIVision1.objectCount);

}

wait(50, msec);

}
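The example above guards on objects[0].exists before printing objectCount. As a rough model of how the two relate, the sketch below counts the entries of a fixed-size array whose exists flag is set. Slot and countExisting are hypothetical names, and the assumption that valid entries are exactly those with exists set to true is taken from the property descriptions in this document rather than from the sensor's internals.

```cpp
#include <array>
#include <cstddef>

// Hypothetical stand-in for one entry of the objects array.
struct Slot {
    bool exists;
    int id;
};

// Counts how many entries are marked as holding a valid detection.
template <std::size_t N>
int countExisting(const std::array<Slot, N>& slots) {
    int total = 0;
    for (const Slot& slot : slots) {
        if (slot.exists) {
            ++total;
        }
    }
    return total;
}
```

Under this model, a count of 0 and a false exists flag at index 0 describe the same situation: the most recent snapshot found nothing.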

largestObject#

largestObject retrieves the largest detected object from the objects array.

This property can be used to always get the largest object from objects without specifying an index.

AIVisionSensor.largestObject

| Component | Description |
|---|---|
| AIVisionSensor | The name of your AI Vision Sensor instance. |

Example

// Display the closest AprilTag's ID

while (true) {

Brain.Screen.setCursor(1, 1);

Brain.Screen.clearLine(1);

AIVision1.takeSnapshot(aivision::ALL_TAGS);

if (AIVision1.objects[0].exists) {

Brain.Screen.print("%d", AIVision1.largestObject.id);

}

wait(50, msec);

}

objects#

objects returns an array of detected object properties. Use the array to access specific property values of individual objects.

There are ten properties that are included with each object stored in the objects array after takeSnapshot is used.

Some property values are based on the detected object's position in the AI Vision Sensor's view at the time that takeSnapshot was called. The AI Vision Sensor has a resolution of 320 by 240 pixels.

AIVisionSensor.objects[index].property

| Component | Description |
|---|---|
| AIVisionSensor | The name of your AI Vision Sensor instance. |
| index | The object index in the array. Index begins at 0. |
| property | An available object property. |

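.exists#

Indicates whether the specified object index contains a valid detected object.

Access

SensorName.objects[index].exists

Return Value

Returns a Boolean indicating whether the specified object index contains a valid detected object:

- true: A valid object exists at the specified index.
- false: No object exists at the specified index.

Example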

// Move forward if an object is detected

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

Drivetrain.driveFor(forward, 50, mm);

}

wait(50, msec);

}

.width#

Returns the width of the detected object.

Access

SensorName.objects[index].width

Return Value

Returns an int16_t representing the width of the detected object in pixels. The value ranges from 1 to 320.

Example

// Approach an object until it's at least 100 pixels wide

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].width < 100) {

Drivetrain.drive(forward);

} else {

Drivetrain.stop();

}

} else {

Drivetrain.stop();

}

wait(50, msec);

}

.height#

Returns the height of the detected object.

Access

SensorName.objects[index].height

Return Value

Returns an int16_t representing the height of the detected object in pixels. The value ranges from 1 to 240.

Example

// Approach an object until it's at least 90 pixels tall

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].height < 90) {

Drivetrain.drive(forward);

} else {

Drivetrain.stop();

}

} else {

Drivetrain.stop();

}

wait(50, msec);

}

.centerX#

Returns the x-coordinate of the center of the detected object.

Access

SensorName.objects[index].centerX

Return Value

Returns an int16_t representing the x-coordinate of the object's center in pixels. The value ranges from 0 to 320.

Example

// Turn until an object is directly in front of the sensor

Drivetrain.setTurnVelocity(10, percent);

Drivetrain.turn(right);

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].centerX > 140 && AIVision1.objects[0].centerX < 180) {

Drivetrain.stop();

}

}

wait(10, msec);

}
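The 140 to 180 window in the example above is a band of 20 pixels on either side of the image's horizontal midpoint (160 for a 320-pixel-wide view). A minimal sketch of that calculation follows; horizontalError, isCentered, and kImageWidth are hypothetical helper names, and the tolerance of 20 is simply the value implied by the example.

```cpp
// Image width taken from the 320 x 240 resolution stated in this document.
constexpr int kImageWidth = 320;

// Signed pixel offset of an object's center from the middle of the view.
// Negative values are left of center, positive values are right of center.
inline int horizontalError(int centerX) {
    return centerX - kImageWidth / 2;
}

// True when the center lies strictly within `tolerance` pixels of the middle,
// matching the strict comparisons (> 140 and < 180) used in the example.
inline bool isCentered(int centerX, int tolerance = 20) {
    const int error = horizontalError(centerX);
    return error > -tolerance && error < tolerance;
}
```

Expressing the check as a signed error also leaves room for choosing a turn direction from the sign, although the right tolerance for a given robot is something to tune by experiment.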

.centerY#

Returns the y-coordinate of the center of the detected object.

Access

SensorName.objects[index].centerY

Return Value

Returns an int16_t representing the y-coordinate of the object's center in pixels. The value ranges from 0 to 240.

Example

// Approach an object until it's close to the sensor

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].centerY < 150) {

Drivetrain.drive(forward);

} else {

Drivetrain.stop();

}

} else {

Drivetrain.stop();

}

wait(50, msec);

}

。角度#

返回检测到的颜色代码或 AprilTag ID 的方向。

访问

SensorName.objects[index].angle

返回值

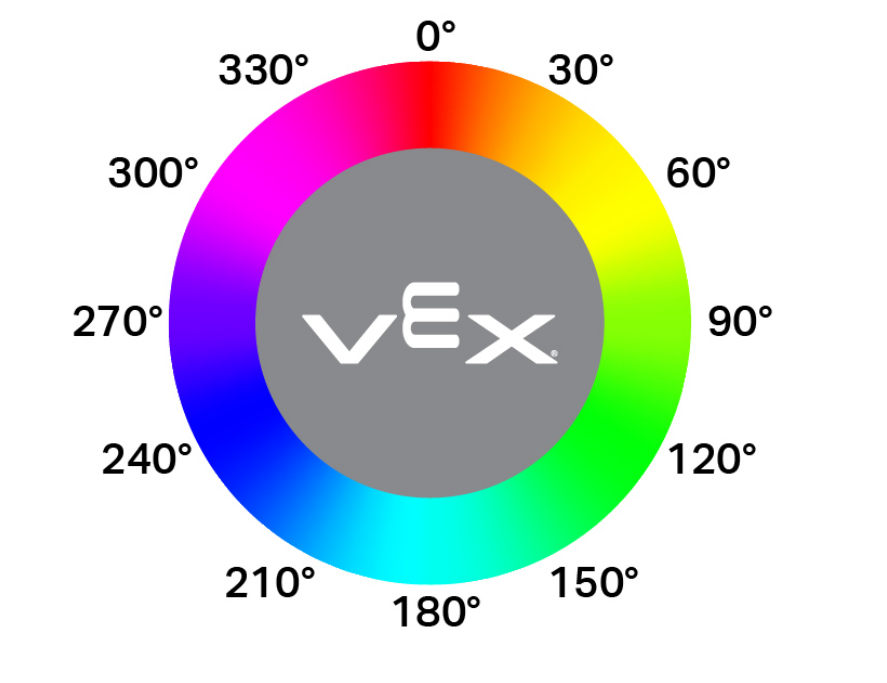

Returns a float representing the rotation of the detected Color Code or AprilTag ID in degrees. The value ranges from 0 to 360.

示例

// Turn left or right depending on how a configured

// Color Code is rotated

while (true) {

AIVision1.takeSnapshot(AIVision1__redBlue);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].angle > 50 && AIVision1.objects[0].angle < 100) {

Drivetrain.turn(right);

}

else if (AIVision1.objects[0].angle > 270 && AIVision1.objects[0].angle < 330) {

Drivetrain.turn(left);

}

else {

Drivetrain.stop();

}

} else {

Drivetrain.stop();

}

wait(50, msec);

}
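Because the angle is reported on a 0 to 360 scale, the two ranges in the example above (50 to 100, and 270 to 330) sit on opposite sides of zero. One way to reason about them is to convert the reading to a signed value between -180 and 180, as in the sketch below. signedAngle is a hypothetical helper, and which physical rotation direction counts as positive is an assumption to verify on the sensor.

```cpp
#include <cmath>

// Converts an angle in degrees to the equivalent value in (-180, 180].
// For example, 270 becomes -90, while 90 stays 90.
inline float signedAngle(float degrees) {
    float wrapped = std::fmod(degrees, 360.0f);
    if (wrapped < 0.0f) {
        wrapped += 360.0f;   // bring negative inputs into [0, 360)
    }
    if (wrapped > 180.0f) {
        wrapped -= 360.0f;   // fold the upper half onto negative values
    }
    return wrapped;
}
```

In signed form, the example's second range becomes -90 to -30, which makes it easier to see that the two branches respond to rotations in opposite directions.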

.originX#

Returns the x-coordinate of the top-left corner of the detected object's bounding box.

Access

SensorName.objects[index].originX

Return Value

Returns an int16_t representing the x-coordinate of the object's bounding box origin in pixels. The value ranges from 0 to 320.

Example

// Display if an object is to the left or the right

while (true) {

Brain.Screen.clearScreen();

Brain.Screen.setCursor(1, 1);

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].originX < 120) {

Brain.Screen.print("To the left!");

} else {

Brain.Screen.print("To the right!");

}

} else {

Brain.Screen.print("No objects");

}

wait(100, msec);

}
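The origin, size, and center properties all describe the same bounding box. The sketch below shows the geometric relationship one would expect between them: the center is the top-left corner plus half the size. This is an assumption based on ordinary bounding-box geometry, since the sensor's exact rounding is not documented here, and Box, centerXOf, centerYOf, and fitsInFrame are hypothetical names.

```cpp
// Hypothetical bounding box using the same field names as the properties.
struct Box {
    int originX;
    int originY;
    int width;
    int height;
};

// Expected center coordinates, assuming integer division for half sizes.
inline int centerXOf(const Box& box) { return box.originX + box.width / 2; }
inline int centerYOf(const Box& box) { return box.originY + box.height / 2; }

// Checks that the box lies entirely inside a frame of the given size
// (320 x 240 by default, per the resolution stated in this document).
inline bool fitsInFrame(const Box& box, int frameWidth = 320, int frameHeight = 240) {
    return box.originX >= 0 && box.originY >= 0 &&
           box.originX + box.width <= frameWidth &&
           box.originY + box.height <= frameHeight;
}
```

For a box at origin (100, 60) that is 40 pixels wide and 20 pixels tall, this gives a center of (120, 70). That is also why comparing originX against a threshold, as the example above does, is not the same as comparing centerX: wide objects shift the two values apart.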

.originY#

Returns the y-coordinate of the top-left corner of the detected object's bounding box.

Access

SensorName.objects[index].originY

Return Value

Returns an int16_t representing the y-coordinate of the object's bounding box origin in pixels. The value ranges from 0 to 240.

Example

// Display if an object is close or far

while (true) {

Brain.Screen.clearScreen();

Brain.Screen.setCursor(1, 1);

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].originY < 110) {

Brain.Screen.print("Close");

} else {

Brain.Screen.print("Far");

}

}

wait(100, msec);

}

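.id#

Returns the AprilTag ID or the AI Classification ID.

Access

SensorName.objects[index].id

Return Value

Returns an int32_t representing the ID of the detected object: the AprilTag ID for an AprilTag, or the classification ID for an AI Classification.

Example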

// Move forward when AprilTag ID 1 is detected

while (true) {

AIVision1.takeSnapshot(aivision::ALL_TAGS);

if (AIVision1.objects[0].exists) {

if (AIVision1.objects[0].id == 1) {

Drivetrain.drive(forward);

}

} else {

Drivetrain.stop();

}

wait(50, msec);

}
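The example above only inspects index 0, so a wanted tag that is not the largest detection would be missed. A minimal sketch of searching every entry for a given ID follows. TagEntry and indexOfId are hypothetical names, and a plain std::vector stands in for the sensor's objects array.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for one entry of the objects array.
struct TagEntry {
    bool exists;
    int id;
};

// Returns the index of the first valid entry with the wanted ID,
// or -1 when no such entry is present.
inline int indexOfId(const std::vector<TagEntry>& objects, int wantedId) {
    for (std::size_t i = 0; i < objects.size(); ++i) {
        if (objects[i].exists && objects[i].id == wantedId) {
            return static_cast<int>(i);
        }
    }
    return -1;
}
```

On the robot, the same loop could run from 0 up to objectCount, reading objects[i].id at each step.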

.score#

Returns the confidence score of the detected AI Classification.

Access

SensorName.objects[index].score

Return Value

Returns an int16_t indicating the confidence score of the detected AI Classification, between 1 and 100.

Example

// Display if a score is confident

while (true) {

AIVision1.takeSnapshot(aivision::ALL_AIOBJS);

if (AIVision1.objects[0].exists) {

Brain.Screen.clearScreen();

Brain.Screen.setCursor(1, 1);

if (AIVision1.objects[0].score > 95) {

Brain.Screen.print("Confident");

} else {

Brain.Screen.print("Not confident");

}

}

wait(50, msec);

}
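Beyond checking a single object, the score can be used to discard uncertain detections before acting on them. The sketch below filters a list by a minimum score. ScoredObject and keepConfident are hypothetical names, and the threshold is something to tune; the value 95 in the example above is only one possible choice.

```cpp
#include <vector>

// Hypothetical stand-in for a detected AI Classification.
struct ScoredObject {
    int id;
    int score;  // confidence, documented as ranging from 1 to 100
};

// Returns only the detections whose score is strictly above `minScore`,
// preserving their original order.
inline std::vector<ScoredObject> keepConfident(const std::vector<ScoredObject>& detections,
                                               int minScore) {
    std::vector<ScoredObject> confident;
    for (const ScoredObject& detection : detections) {
        if (detection.score > minScore) {
            confident.push_back(detection);
        }
    }
    return confident;
}
```

Since the order is preserved, the first element of the filtered list is still the largest detection among those that passed the threshold.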